Reimagining an Enterprise AI Assistant

Redesigning an enterprise AI assistant for employees to reach market parity, increasing adoption through structured, workflow-driven interaction design.

Scope

AI · Systems · Mobile + Web

/

Client

Consulting

/

Duration

2 months

/

Year

2025

/

Challenge

(01)

Informative but not differentiated

The existing AI assistant produced accurate responses but lacked organisational context, regulatory depth, internal systems integration, a differentiated UX and a clear value add beyond generic AI tools.

As a result, it sat in the quadrant of low adoption and limited perceived capability.

The goal was to reposition the assistant toward higher capability and higher adoption by rethinking its interaction model, not just its model performance.

/

Process

(02)

Research was key

1. Competitive benchmarking

I began with a structured audit of 56 AI products across industries, analysing output structuring models, transparency and citation patterns, embedded vs standalone assistant paradigms, agent-based behaviours and iterative refinement controls.

This revealed a shift toward structured, inspectable, task-oriented AI experiences.

2. Workflow observation and capability mapping

In parallel, I observed employee workflows to understand how research, drafting and review tasks were actually performed.

I mapped existing internal AI capabilities against these workflows to identify usability gaps, redundant tools, opportunities for consolidation and integration constraints.

This reframed the assistant as a workflow layer rather than a chat interface.

3. Experience principles and Jobs-to-be-Done

From research synthesis, I defined core principles:

Transparency over opacity

Assist, don’t override

Structured outputs by default

Progressive disclosure

Clear system status

I translated these into Jobs-to-be-Done tied to real tasks: research efficiently, draft with control, review for risk and summarise internal knowledge.

4. Redesigning the assistant

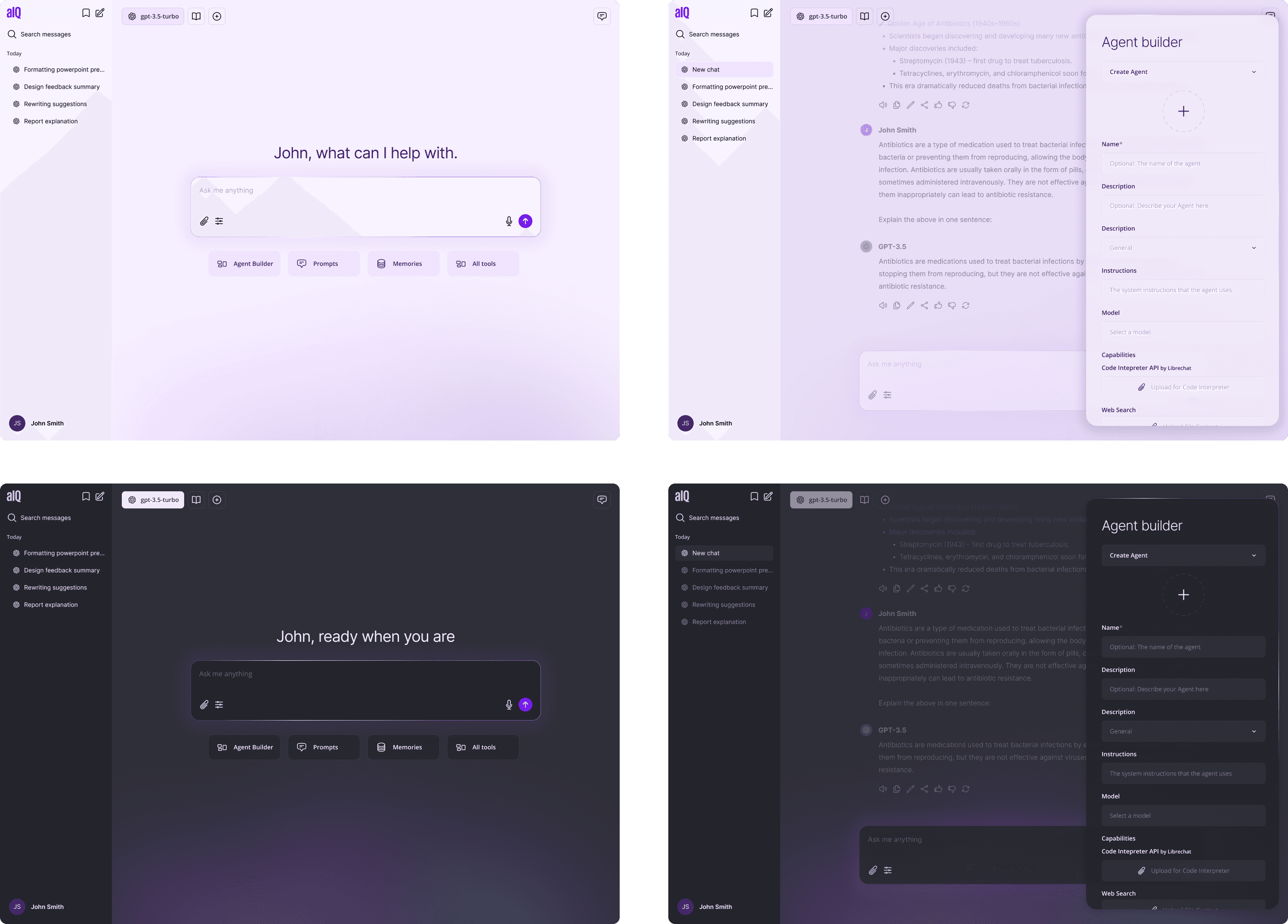

Using these principles and the patterns from benchmarking, we redesigned the assistant around four structured behavioural modes.

Guardian mode: ensures outputs are accurate, explainable, traceable and compliant. Features include transparency tags, source references and risk surfacing.

Catalyst mode: accelerates research and the production of client-ready outputs. Features include structured output templates, context-aware synthesis and inline affordances.

Navigator mode: streamlines routine tasks and project workflows. Features include context persistence, workflow-linked prompts and task-based entry points.

Nurture mode: supports wellbeing, culture and growth. Features include motivational framing and adaptive interaction tone.

5. Ideation and consolidation

I facilitated structured ideation sessions using How Might We questions, Crazy 8s for rapid exploration and dot voting.

We generated 11 early concepts spanning conversational overlays, embedded assistants and multi-agent orchestration.

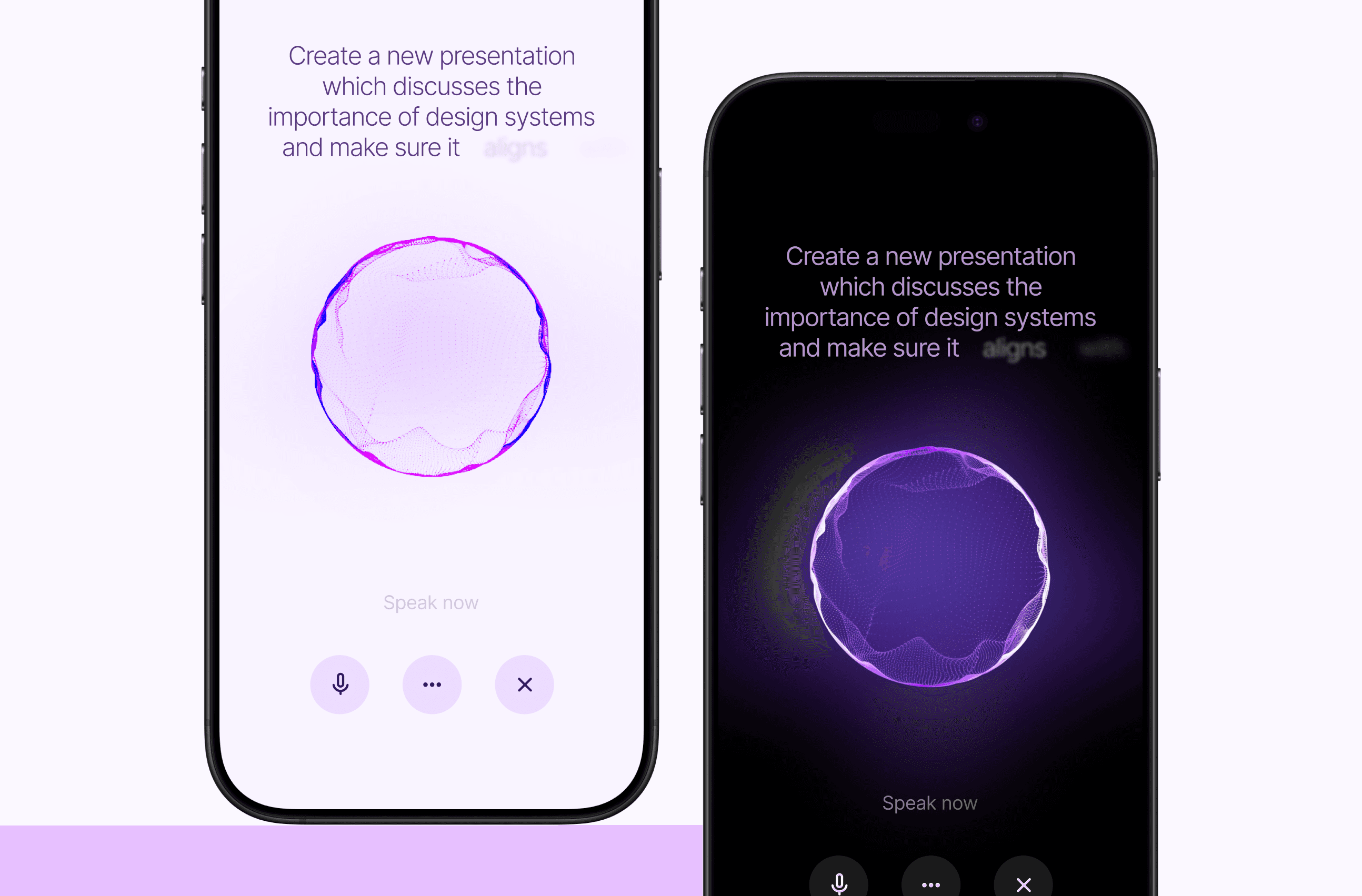

Following feature consolidation and feasibility alignment with engineering, we converged on a unified, mobile-first assistant framework.

6. Interaction design and prototyping

Working in focused design groups, we translated prioritised features into wireframes and refined them into high-fidelity mobile prototypes.

Design decisions accounted for model latency, output variability and the risk of hallucination.

/

Reflection

(03)

The biggest opportunity wasn’t redesigning the tool, but embedding it directly into everyday desktop workflows.

It became clear that the value wasn’t in adding more functionality. It was in fitting seamlessly into how employees already work.